Transfer Learning and Multi-Modality Recognition

Introduction

Transfer learning provides techniques for transferring learned knowledge from a source domain to a target domain by finding a mapping between them.

In this paper, we discuss a method for projecting both source and target data to a generalized subspace where each target sample can be represented by some combination of source samples. Employing a low-rank constraint during this transfer preserves the structure of both the source and target domains. This approach has three benefits.

- First, good alignment between the domains is ensured because only the relevant source data, lying in some subspace of the source domain, is used to reconstruct the target data.

- Second, the discriminative power of the source domain is naturally passed on to the target domain.

- Third, noisy information is filtered out during the knowledge transfer.

Extensive experiments on synthetic data and on important computer vision problems, such as face recognition and visual domain adaptation for object recognition, demonstrate the superiority of the proposed approach over existing, well-established methods.
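The core idea of reconstructing target samples from source samples under a low-rank constraint can be illustrated with a small sketch. This is not the algorithm from the papers listed below: it omits the learned subspace projection and simply solves a nuclear-norm-regularized least-squares problem by proximal gradient descent. The function names, the parameter `tau`, and the synthetic data are all assumptions made for illustration.

```python
import numpy as np

def svt(M, tau):
    """Singular value thresholding: proximal operator of tau * nuclear norm."""
    U, s, Vt = np.linalg.svd(M, full_matrices=False)
    s = np.maximum(s - tau, 0.0)
    return (U * s) @ Vt

def low_rank_reconstruction(Xs, Xt, tau=0.1, n_iter=500):
    """Approximately solve min_Z 0.5 * ||Xt - Xs Z||_F^2 + tau * ||Z||_*.

    Columns of Xs and Xt are source and target samples. Illustrative only:
    no subspace projection is learned here.
    """
    L = np.linalg.norm(Xs, 2) ** 2            # Lipschitz constant of the gradient
    Z = np.zeros((Xs.shape[1], Xt.shape[1]))
    for _ in range(n_iter):
        grad = Xs.T @ (Xs @ Z - Xt)           # gradient of the least-squares term
        Z = svt(Z - grad / L, tau / L)        # gradient step, then shrink singular values
    return Z

# Synthetic data (assumed): both domains lie in a shared 3-dimensional subspace.
rng = np.random.default_rng(0)
basis = rng.standard_normal((20, 3))
Xs = basis @ rng.standard_normal((3, 40))     # 40 source samples
Xt = basis @ rng.standard_normal((3, 15))     # 15 clean target samples
Xt_noisy = Xt + 0.01 * rng.standard_normal(Xt.shape)

Z = low_rank_reconstruction(Xs, Xt_noisy)
rel_err = np.linalg.norm(Xt - Xs @ Z) / np.linalg.norm(Xt)
rank_Z = np.linalg.matrix_rank(Z, tol=1e-6)
print(f"rank of Z: {rank_Z}, relative error to clean target: {rel_err:.4f}")
```

In this toy setting, each column of `Z` holds the coefficients that express one target sample as a combination of source samples, and the nuclear-norm penalty keeps `Z` low-rank so that the reconstruction follows the shared subspace rather than the added noise.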

Related Work

- Ming Shao, Carlos Castillo, Zhenghong Gu, and Yun Fu, Low-Rank Transfer Subspace Learning, International Conference on Data Mining (ICDM), pages 1104–1109, 2012. [pdf] [bib]

- Ming Shao, Dmitry Kit, and Yun Fu, Generalized Transfer Subspace Learning through Low-Rank Constraint, International Journal of Computer Vision (IJCV), vol. 109, no. 1-2, pages 74–93, 2014. [pdf] [bib]

- Zhengming Ding, Ming Shao, and Yun Fu, Latent Low-Rank Transfer Subspace Learning for Missing Modality Recognition, AAAI Conference on Artificial Intelligence (AAAI), pages 1192–1198, 2014. [pdf] [bib]

- Zhengming Ding, Ming Shao, and Yun Fu, Missing Modality Transfer Learning via Latent Low-Rank Constraint, IEEE Transactions on Image Processing (TIP), vol. 24, no. 11, pages 4322–4334, 2015. [pdf] [bib]

- Hongfu Liu, Ming Shao, and Yun Fu, Structure-Preserved Multi-Source Domain Adaptation, IEEE International Conference on Data Mining (ICDM), pages 1059–1064, 2016. [pdf] [bib]

- Ming Shao, Zhengming Ding, Handong Zhao, and Yun Fu, Spectral Bisection Tree Guided Deep Adaptive Exemplar Autoencoder for Unsupervised Domain Adaptation, AAAI Conference on Artificial Intelligence (AAAI), pages 2023–2029, 2016. [pdf] [bib]

Multi-Modality Learning

Introduction

Recent face recognition work has concentrated on a different spectrum, namely near infrared, which can only be perceived by specially designed devices, in order to avoid the illumination problem. This is a sensible way to counter lighting variation in face recognition. However, using near infrared for face recognition inevitably introduces a new problem: cross-modality classification. In brief, the images registered in the system are in one modality, while the test images captured on the spot are in another. In addition, there can be many within-modality variations, such as pose and expression, which make the problem more complicated.

To address this problem, we propose a novel framework called hierarchical hyperlingual-words. First, we design a novel structure, called generic hyperlingual-words, to capture the high-level semantics across different modalities and within each modality in a weakly supervised fashion, meaning that only modality-pair and variation information is needed in training. Second, to improve the discriminative power of hyperlingual-words, we propose a novel distance metric through the hierarchical structure of hyperlingual-words. Extensive experiments on multi-modality face databases demonstrate the superiority of our method over state-of-the-art work on face recognition tasks subject to pose and expression variations.

Related Work

- Ming Shao and Yun Fu, Cross-Modality Feature Learning through Generic Hierarchical Hyperlingual-Words, IEEE Transactions on Neural Networks and Learning Systems (TNNLS), vol. 28, no. 2, pages 451–463, 2017. [pdf] [bib]

- Ming Shao and Yun Fu, Hierarchical Hyperlingual-Words for Multi-Modality Face Classification, International Conference on Automatic Face and Gesture Recognition (FG), pages 1–6, 2013. [pdf] [bib]

Zero-Shot Learning

Introduction

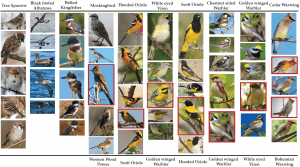

Zero-shot learning for visual recognition has attracted much interest in recent years. However, the semantic gap between visual features and their underlying semantics is still the biggest obstacle in zero-shot learning. To overcome this hurdle, we propose an effective Low-rank Embedded Semantic Dictionary learning (LESD) method built on an ensemble strategy. Specifically, we formulate a novel framework that jointly seeks a low-rank embedding and a semantic dictionary to link visual features with their semantic representations, which manages to capture features shared across different observed classes. Moreover, an ensemble strategy is adopted to learn multiple semantic dictionaries that constitute the latent basis for the unseen classes. Consequently, our model can extract a variety of visual characteristics within objects, which generalize well to unknown categories. Extensive experiments on several zero-shot benchmarks verify that the proposed model can outperform state-of-the-art approaches.
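For readers new to the setting, the general zero-shot pipeline of linking visual features to semantic representations can be sketched as follows. This is a generic ridge-regression baseline, not LESD: it learns neither a low-rank embedding nor an ensemble of semantic dictionaries. The function names, the synthetic data generator, and the attribute vectors are all hypothetical.

```python
import numpy as np

def fit_visual_to_semantic(X, S, reg=1.0):
    """Ridge regression: W maps visual features X (n x d) to semantic vectors S (n x k)."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + reg * np.eye(d), X.T @ S)

def predict_unseen(X, W, prototypes):
    """Pick, for each sample, the class prototype with the highest cosine similarity."""
    S_hat = X @ W
    S_hat = S_hat / np.linalg.norm(S_hat, axis=1, keepdims=True)
    P = prototypes / np.linalg.norm(prototypes, axis=1, keepdims=True)
    return np.argmax(S_hat @ P.T, axis=1)

rng = np.random.default_rng(0)
k, d = 5, 30                                  # semantic and visual dimensions (assumed)
A = rng.standard_normal((k, d))               # hypothetical semantic-to-visual map
seen_protos = rng.standard_normal((8, k))     # semantic vectors of 8 seen classes
unseen_protos = np.array([[1, 0, 1, 0, 1],    # attribute vectors of 3 unseen classes
                          [0, 1, 1, 0, 0],
                          [1, 1, 0, 1, 0]], dtype=float)

def sample(protos, n_per_class):
    """Draw noisy visual features for every class prototype (synthetic data)."""
    labels = np.repeat(np.arange(len(protos)), n_per_class)
    X = protos[labels] @ A + 0.1 * rng.standard_normal((labels.size, d))
    return X, labels

X_seen, y_seen = sample(seen_protos, 50)
X_unseen, y_unseen = sample(unseen_protos, 20)

# Train on seen classes only, then classify samples from classes never seen in training.
W = fit_visual_to_semantic(X_seen, seen_protos[y_seen])
accuracy = np.mean(predict_unseen(X_unseen, W, unseen_protos) == y_unseen)
print(f"unseen-class accuracy: {accuracy:.2f}")
```

The mapping `W` is fit using seen classes alone; unseen classes enter only through their semantic prototypes at prediction time, which is what makes the setting zero-shot.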

Related Work

- Zhengming Ding, Ming Shao, and Yun Fu, Low-Rank Embedded Ensemble Semantic Dictionary for Zero-Shot Learning, IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2017. [pdf] [bib]